Demo: Interfacing the Bottlenose™ Depth Camera with an Affordable Microcontroller for Object Detection

This is the first post in a series of demos. This one focuses on interfacing a depth camera with an affordable microcontroller for object detection.

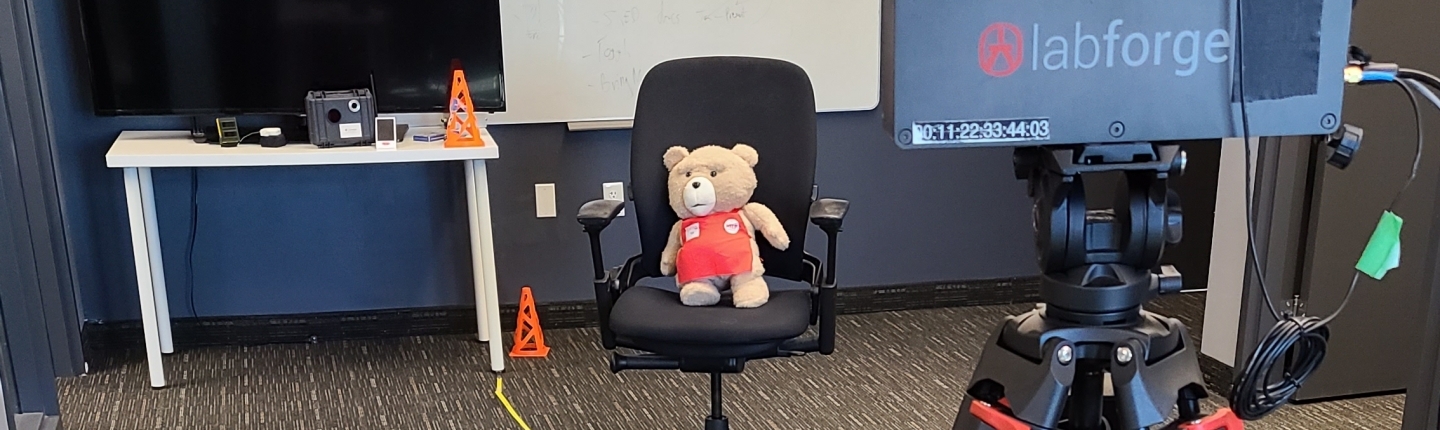

For this demo, our Labforge engineers, Thomas and Martin, took on the challenge of finding inexpensive, easy-to-implement solutions for applications in perception, 3D mapping, and AI. For real-time object detection, they interfaced a general-purpose microcontroller with Bottlenose™. Both parts are affordable for most applications: the camera comes in at a fifth of the price of a normal industrial smart camera, and a simple, low-cost general-purpose microcontroller does the job without any additional computers.

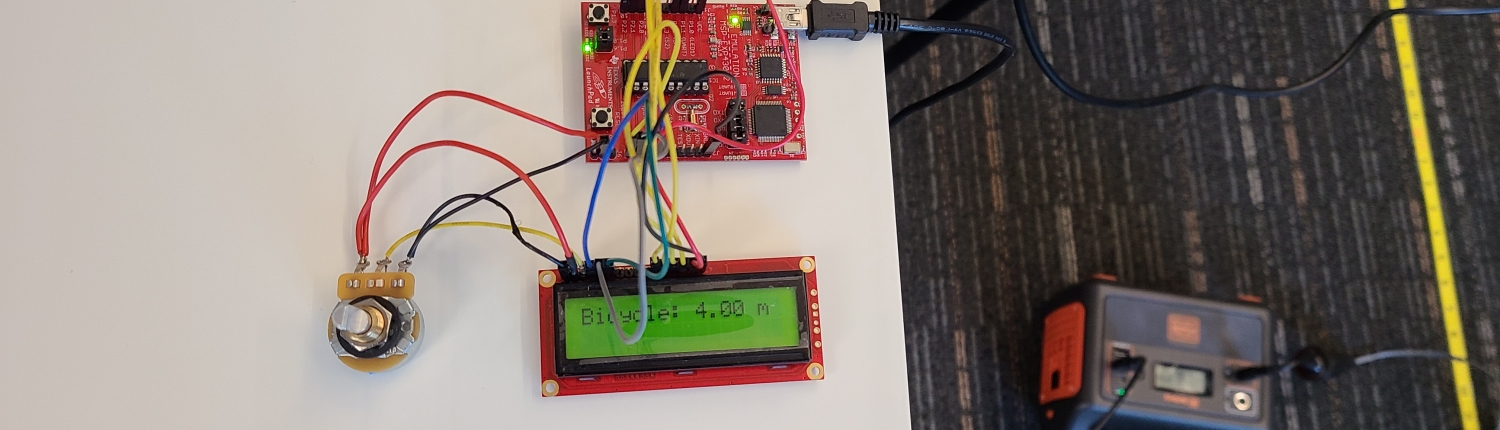

They began by wiring up our latest-generation Bottlenose™ cameras over a serial port to a Texas Instruments MSP430™ microcontroller. Each camera has a supercomputer-level processing block in the form of a 20.5 TOPS ASIC that is capable of advanced computer vision tasks like rectification, object detection, and depth calculation.

This means that even if you don’t have an Ethernet port or a PC to interface with the camera, you can still achieve high-end results by simply using a microcontroller to build your next robot. All known parameters of the computer vision algorithms are exposed through the API.

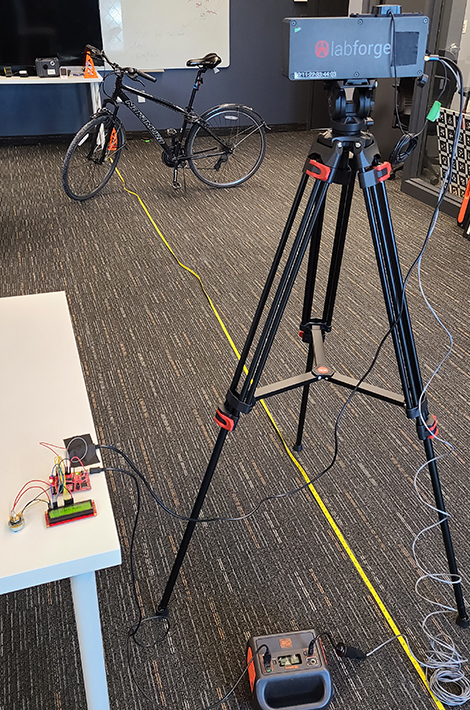

How Bottlenose™ and the Microcontroller are Connected to Achieve Object Detection

The camera and microcontroller are powered by a battery. The connection was rigged up in an hour, and an LCD on the microcontroller shows the results. In the demo, the display shows a bicycle correctly detected at a distance of 4.00 m.

No PCs, embedded or otherwise, were used to interface with the camera. And this isn’t all Bottlenose™ can do. You can also ask it to detect feature points, calculate feature descriptors, match points in time and space, and much more.
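To make the microcontroller's role concrete, here is a minimal sketch of the receive-and-display step: read one detection from the serial link, validate it, and format it for the LCD. The wire format (`label,confidence,distance_m`, one detection per line), the 16-character display width, and the names `Detection`, `parse_detection`, and `lcd_line` are all assumptions made for illustration; they are not the actual Bottlenose™ protocol or API. The sketch is in Python for readability, and the same logic would be ported to C on a part like the MSP430™.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Detection:
    label: str         # class name, e.g. "bicycle"
    confidence: float  # detector score in [0, 1]
    distance_m: float  # distance to the object in metres


def parse_detection(line: str) -> Optional[Detection]:
    """Parse one line of the ASSUMED format 'label,confidence,distance_m'.

    Returns None for malformed lines so a noisy serial link cannot
    crash the display loop.
    """
    parts = line.strip().split(",")
    if len(parts) != 3:
        return None
    label = parts[0].strip()
    try:
        confidence = float(parts[1])
        distance_m = float(parts[2])
    except ValueError:
        return None
    if not label or not (0.0 <= confidence <= 1.0) or distance_m < 0.0:
        return None
    return Detection(label, confidence, distance_m)


def lcd_line(det: Detection, width: int = 16) -> str:
    """Format a detection for one row of a character LCD.

    The default width of 16 characters is an assumption.
    """
    dist = f"{det.distance_m:.2f} m"
    # Reserve one space plus the distance text; truncate long labels to fit.
    room = width - len(dist) - 1
    label = det.label.capitalize()[:room]
    return f"{label} {dist}".ljust(width)
```

With this sketch, the line `bicycle,0.91,4.00` becomes the display text `Bicycle 4.00 m`, padded to the display width. Rejecting malformed lines instead of raising keeps the loop running if a byte is dropped on the serial link.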

Features and Benefits of this Setup

- For object detection, Bottlenose™ runs a non-quantized YOLOv3 model with more than 100 MB of weights. Other smart cameras run a 5 MB version of YOLO, which compromises many of its benefits, and engineers end up falling back on a GPU anyway.

- For 3D object detection, SGM (semi-global matching, a stereo depth algorithm) runs simultaneously with YOLOv3. This is most likely impossible to do on an embedded GPU.

- Robots and industrial automation equipment do not need to be expensive. Bottlenose™ is 1/5 the price of a normal industrial smart camera.
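As a rough illustration of how a 2D detection and a stereo depth result can combine into a 3D one, the sketch below takes a bounding box and a disparity map and returns a distance using the standard stereo relation, depth = focal length × baseline / disparity. This is a generic approach and not a description of how Bottlenose™ does it internally. The function names, the convention that non-positive disparities mark invalid pixels, and the focal length and baseline in the usage note are all assumptions.

```python
from statistics import median
from typing import List, Optional, Tuple

# (x_min, y_min, x_max, y_max) in pixels; the max edges are exclusive.
Box = Tuple[int, int, int, int]


def disparity_to_depth(disparity_px: float, focal_px: float, baseline_m: float) -> float:
    """Standard stereo relation: depth = focal length * baseline / disparity."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def object_distance(disparity: List[List[float]], box: Box,
                    focal_px: float, baseline_m: float) -> Optional[float]:
    """Estimate the distance to a detected object from disparities in its box.

    Non-positive disparities are treated as invalid pixels (an assumption).
    The median is used so background pixels inside the box have less
    influence than they would with a mean.
    """
    x0, y0, x1, y1 = box
    valid = [d for row in disparity[y0:y1] for d in row[x0:x1] if d > 0]
    if not valid:
        return None  # no usable depth inside the box
    return disparity_to_depth(median(valid), focal_px, baseline_m)
```

For example, with a hypothetical focal length of 800 px and a baseline of 0.1 m, a box whose median disparity is 20 px corresponds to a distance of 4 m.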

How Can You Use Bottlenose™?

- The central processor on the robot can be anything that is in stock. You are no longer limited to a single architecture.

- This processor can be very small if the robot has very well-defined use cases. For example, Bottlenose™ could also talk to a PLC and from there toggle a host of actuators like solenoids for ejecting parts on an assembly line.

- If you have applications in mind and would like to know how Bottlenose™ can help, please contact us. We would love to sit down with you and hash out ideas. Single-unit development cameras will be available from mouser.com. For volume pricing, please let us know your estimated needs and timelines.

About Labforge

Labforge is a technology company based in Waterloo, ON, that designs, develops, and sells smart cameras. Our cameras are used for automation and robotics.
